Senior Project Part 4: Conducting the Survey and the Resulting Data

Throughout the end of January and the beginning of February, I conducted the survey and gathered data from my subjects through my experiment. I administered the experiment mainly over the first two weekends of February, since weekend slots worked best for the subjects’ schedules and availability. Results varied from subject to subject for each of the seven films chosen; many subjects’ audio perceptions differed completely from others’, leaving me with a large amount of diverse data to sift through and draw conclusions from. Certain parts of the experiment went far differently than expected, especially the time it took to test each individual subject. Despite these unforeseen changes, I was able to stay on schedule, and I feel I got extremely valuable data from each subject regarding their auditory perceptions.

On my first day of testing, I tested subjects #1, #2, and #7. Despite it being the very first day, when complications and unexpected occurrences were most likely, I got some great highlights from the subjects surveyed. For example, subject #1 chose to stop the horror film audio clip and skip through that film’s video clip because of how stressful it was. As another example, subject #7 was incredibly perceptive and was able to vividly envision most of the audio-only clips, understanding their sonic qualities and pinpointing their sources. While most of the testing went well, one aspect continually proved difficult: the timing of the experiment. On the first day, I expected most subjects to take around an hour. However, each subject took around 1.5 hours, with the longest session running almost 1 hour and 50 minutes. I hadn’t expected this, and it threw me off; I had to ask some subjects to wait a short while as I finished up with the subject before them. To account for this in the testing days to come, I added an extra 30 minutes between testing times and moved some subjects to different days and times in order to spread the subjects out over more days.

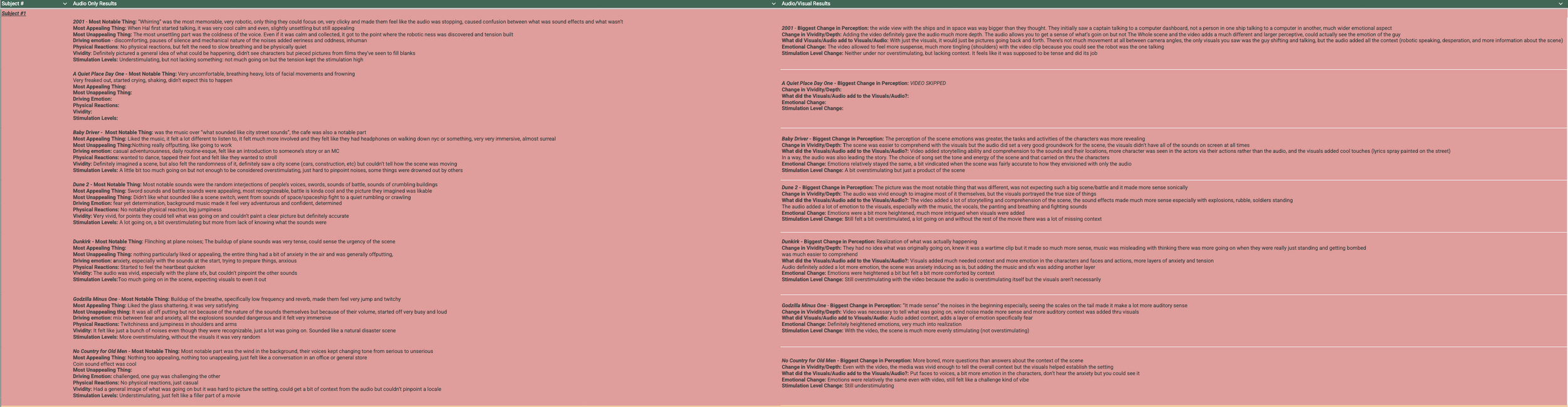

Here are Subject #1’s answers to the questions. As you can see, I recorded as much as I could for both the audio-only and audio-visual responses. Each subject’s recorded data is laid out in much the same way.

My next few days of testing went more and more smoothly as the experiment phase went on. My second day also had some notable highlights in subject perception, like how the coin toss noise from No Country for Old Men had started to become a perceptive trend in the data of all subjects, or how subject #8 had a lot of strong and clear emotions that came to them through their audio perceptions. Despite the timing difficulties, I was able to work around the longer sessions and, by the second week of February, I had completed 15 hours of experimental testing across all subjects.

My note-taking for each subject was much faster than I initially intended, but I was definitely able to get a lot of descriptive audio perceptions from each subject. I’ve started compiling the data in a massive spreadsheet that lists the individual subjects 1-10, their answers to the questions I asked, and my additional observations and conclusions about their perceptions. I’m still in the process of moving that information over from one document to the other, and I expect to finish by the end of this week.

As for the analysis and conclusions to draw from the data, I first plan to note any additional observations I had for each subject outside of their answers to the questions. I then plan to analyze and rank each of the seven films on a scale of immersion, or in other words, how involved each subject felt in the audio/film. This means ranking the films on how much each subject felt they actually were a part of the audio or cinematic universe. From this, I want to analyze which audio or film characteristics instill immersion within the films. What exactly makes film #2 more immersive than film #4? Why were so many subjects immersed in film #6 while barely anyone was immersed in film #1? By answering questions like these, I feel I’ll be able to come to a solid conclusion on which audio/film characteristics build the deepest levels of media immersion. Knowing this, I can work to practice it within my own sound design.
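As a rough illustration of how the immersion ranking could be computed once the spreadsheet is complete, here is a minimal Python sketch. It assumes each subject's immersion in each film has been coded as a number on a 1-5 scale, which is an assumption and not something the experiment has settled on. The ratings below are made-up placeholders, not real survey results, and the names `ratings` and `rank_films_by_immersion` are hypothetical.

```python
from statistics import mean

# Hypothetical placeholder ratings (NOT real survey data): each subject's
# immersion rating per film on an assumed 1-5 scale.
ratings = {
    "subject_1": {"film_1": 2, "film_2": 4, "film_3": 3},
    "subject_2": {"film_1": 1, "film_2": 5, "film_3": 3},
    "subject_3": {"film_1": 2, "film_2": 4, "film_3": 5},
}


def rank_films_by_immersion(ratings):
    """Return (film, mean rating) pairs sorted from most to least immersive."""
    per_film = {}
    # Collect every subject's score under the film it belongs to.
    for subject_scores in ratings.values():
        for film, score in subject_scores.items():
            per_film.setdefault(film, []).append(score)
    # Average each film's scores, then sort by that average, highest first.
    averages = {film: mean(scores) for film, scores in per_film.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


ranking = rank_films_by_immersion(ratings)
for position, (film, avg) in enumerate(ranking, start=1):
    print(f"{position}. {film}: {avg:.2f}")
```

Averaging is only one possible choice; a median or a count of subjects who reported feeling immersed might suit the qualitative notes better, and the written observations would still be needed to explain why one film ranks above another.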